
Cross entropy loss function

11/7/2022

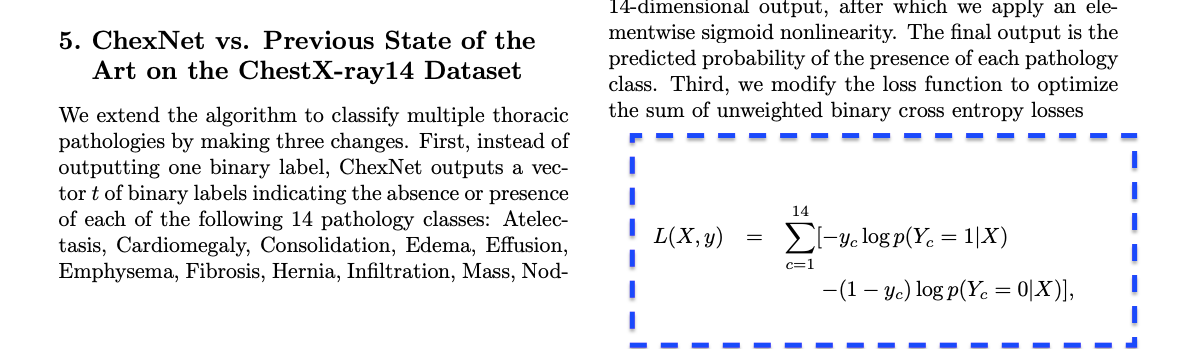

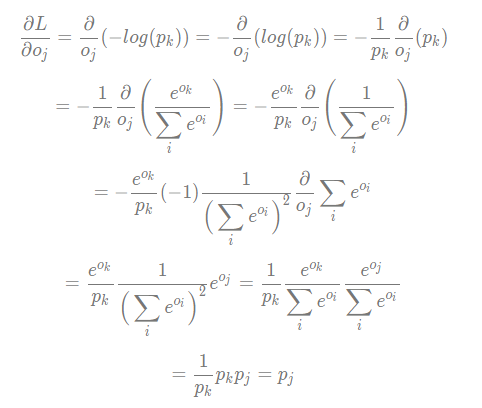

From a purely mathematical perspective, CE is an error metric that compares a set of predicted probabilities with a set of actual probabilities. From a neural network perspective, CE is an error metric that compares a set of computed NN output nodes with values from training data. Both forms of CE are really the same, but the two different contexts can make the forms look different. A concrete example is the best way to explain the purely mathematical form of CE.
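As a minimal sketch of the purely mathematical form, the snippet below computes CE as the negative sum of each actual probability times the natural log of the matching predicted probability. The probability values and the function name `cross_entropy` are illustrative assumptions, not taken from the demo program.

```python
import math

def cross_entropy(predicted, actual):
    """CE = -sum(actual[i] * ln(predicted[i])) over all classes."""
    total = 0.0
    for p, a in zip(predicted, actual):
        if a > 0.0:
            # Terms where the actual probability is 0 contribute nothing,
            # and skipping them avoids evaluating log(0).
            total += a * math.log(p)
    return -total

# Hypothetical values: three classes, the second one is the correct class.
predicted = [0.1, 0.7, 0.2]
actual = [0.0, 1.0, 0.0]

ce = cross_entropy(predicted, actual)
print("CE = %.4f" % ce)  # CE = 0.3567, which is -ln(0.7)
```

When the actual probabilities are 1-of-N encoded, only the term for the correct class survives, so CE reduces to the negative log of the probability assigned to the correct class.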

If you look up "cross entropy" on the Internet, one of the difficulties in understanding CE is that you'll find several different kinds of explanations. Additionally, CE error is also called "log loss" (the two terms are ever so slightly different, but from an engineering point of view the difference isn't important).

This article assumes you understand the neural network input-output mechanism, and have at least a rough idea of how back-propagation works, but does not assume you know anything about CE error. The demo program is too long to present in its entirety in this article, but the complete source code is available in the accompanying file download.

The demo loaded the training and test data into two matrices, and then displayed the first few training items. Although it can't be seen in the demo run screenshot, the demo neural network uses the hyperbolic tangent function for hidden node activation, and the softmax function to coerce the output nodes to sum to 1.0 so they can be interpreted as probabilities.

The demo uses the back-propagation training algorithm with CE error. The back-propagation algorithm is iterative and you must supply a maximum number of iterations (80 in the demo) and a learning rate (0.010) that controls how much each weight and bias value changes in each iteration. The max-iteration and learning rate are free parameters. The demo displays the value of the CE error every 10 iterations during training. Understanding the relationship between back-propagation and cross entropy is the main goal of this article.

After training completed, the demo computed the classification accuracy of the resulting model on the training data (0.9750 = 117 out of 120 correct) and on the test data (1.0000 = 30 out of 30 correct). In non-demo scenarios, the classification accuracy on your test data is a very rough approximation of the accuracy you'd expect to see on new, previously unseen data.
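The pieces described above (tanh hidden nodes, softmax outputs, CE error, a learning-rate-scaled weight update and classification accuracy) can be sketched with NumPy as follows. This is not the demo's actual code: the function names, the random starting weights and the single input item are assumptions for illustration, and for brevity the update step touches only the hidden-to-output weights and output biases, whereas full back-propagation also updates the input-to-hidden weights and hidden biases.

```python
import numpy as np

def softmax(z):
    # Subtract the max before exponentiating for numerical stability.
    e = np.exp(z - np.max(z))
    return e / np.sum(e)

def forward(x, ih_wts, h_biases, ho_wts, o_biases):
    # tanh activation on hidden nodes, softmax on output nodes.
    hidden = np.tanh(np.dot(x, ih_wts) + h_biases)
    output = softmax(np.dot(hidden, ho_wts) + o_biases)
    return hidden, output

def cross_entropy(output, target):
    # CE for one item: -sum(target * ln(output)).
    return -np.sum(target * np.log(output))

def accuracy(outputs, targets):
    # Fraction of items whose largest output matches the 1-of-N target.
    return np.mean(np.argmax(outputs, axis=1) == np.argmax(targets, axis=1))

# Hypothetical small random starting weights for a 4-5-3 network.
rng = np.random.RandomState(1)
ih_wts = rng.uniform(-0.1, 0.1, size=(4, 5))
h_biases = np.zeros(5)
ho_wts = rng.uniform(-0.1, 0.1, size=(5, 3))
o_biases = np.zeros(3)

x = np.array([5.1, 3.5, 1.4, 0.2])   # one illustrative iris item
target = np.array([1.0, 0.0, 0.0])   # setosa in 1-of-N encoding
learn_rate = 0.01                    # same value the demo run uses

hidden, output = forward(x, ih_wts, h_biases, ho_wts, o_biases)
ce_before = cross_entropy(output, target)

# With softmax outputs and CE error, the gradient of the error with
# respect to the pre-softmax output sums is simply (output - target).
o_signal = output - target
ho_wts -= learn_rate * np.outer(hidden, o_signal)
o_biases -= learn_rate * o_signal

_, output_after = forward(x, ih_wts, h_biases, ho_wts, o_biases)
ce_after = cross_entropy(output_after, target)

print("outputs sum to %.4f" % np.sum(output))
print("CE before update = %.4f, after = %.4f" % (ce_before, ce_after))
```

The simple `output - target` signal is the point where CE error and back-propagation meet: with squared error, the corresponding term would carry an extra factor from the derivative of the output activation.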
The goal of this article is to give you a solid understanding of exactly what CE error is, and provide code you can use to investigate CE error. Pure Python code is too slow for most serious machine learning experiments, but a secondary goal of this article is to give you code examples that will help you to use the Python APIs for Cognitive Toolkit or TensorFlow.

A good way to see where this article is headed is to examine the screenshot of a demo program, shown in Figure 1. The demo Python program uses CE error to train a simple neural network model that can predict the species of an iris flower using the famous Iris Dataset. Behind the scenes I'm using the Anaconda (version 4.1.1) distribution that contains Python 3.5.2 and NumPy 1.11.1, which are also used by Cognitive Toolkit and TensorFlow at the time I'm writing this article.

Python Neural Network Cross Entropy Error Demo

The goal is to predict species from sepal and petal length and width. The full 150-item dataset has 50 setosa items, followed by 50 versicolor, followed by 50 virginica. Each item has four numeric predictor variables (often called features): sepal (a leaf-like structure) length and width, and petal length and width, followed by the species ("setosa," "versicolor" or "virginica"). The demo program uses 1-of-N label encoding so setosa = (1,0,0), versicolor = (0,1,0) and virginica = (0,0,1).

Before writing the demo program, I created a 120-item file of training data (using the first 40 of each species) and a 30-item file of test data (the leftover 10 of each species). The demo program creates a simple neural network with four input nodes (one for each feature), five hidden processing nodes (the number of hidden nodes is a free parameter and must be determined by trial and error) and three output nodes (corresponding to encoded species).
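The 1-of-N encoding and the train/test split can be sketched as below. The helper names `one_of_n` and `split_indices` are hypothetical, and the split assumes the first 40 items of each species go to training and the remaining 10 to test, which is what the 120-item and 30-item file sizes imply for a dataset ordered as 50 setosa, 50 versicolor, 50 virginica.

```python
import numpy as np

SPECIES = ["setosa", "versicolor", "virginica"]

def one_of_n(species):
    # setosa -> (1,0,0), versicolor -> (0,1,0), virginica -> (0,0,1)
    vec = np.zeros(len(SPECIES), dtype=np.float32)
    vec[SPECIES.index(species)] = 1.0
    return vec

def split_indices(n_per_species=50, n_train=40, n_species=3):
    # The dataset is ordered by species, so each species occupies a
    # contiguous block of row indices.
    train, test = [], []
    for s in range(n_species):
        start = s * n_per_species
        train.extend(range(start, start + n_train))
        test.extend(range(start + n_train, start + n_per_species))
    return train, test

train_idx, test_idx = split_indices()
print(one_of_n("versicolor"))
print(len(train_idx), len(test_idx))  # 120 30
```

Taking a fixed slice of each species keeps the three classes equally represented in both files, which a purely random 120/30 split would not guarantee.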
In this article, I explain cross entropy (CE) error for neural networks, with an emphasis on how it differs from squared error (SE). I use the Python language for my demo program because Python has become the de facto language for interacting with powerful deep neural network libraries, notably the Microsoft Cognitive Toolkit and Google TensorFlow.
